Direct hardware integration

Both apps integrate the Insta360 SDK at a native level, handling camera discovery, pairing, live preview, capture control, and high-speed file transfer over Wi-Fi Direct.

Field workers needed to connect Insta360 cameras to their mobile devices and capture imagery without friction.

Construction sites often have poor or no connectivity, so the apps had to work fully offline and sync once a connection became available.

Raw 360° footage needed to be processed into 3D models without manual intervention, using AI-powered photogrammetry.

The workforce uses a mix of iOS and Android devices, so both platforms needed full feature parity with native performance.

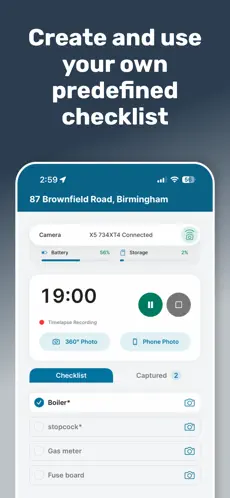

Captures needed to be organised by site, room, and checklist, giving teams a structured approach to documentation.

Teams needed full transparency over the status of every capture, from upload through to 3D model delivery, without having to chase updates manually.

AIM Housing needed a reliable, field-ready tool for capturing and managing 360-degree site documentation across their housing developments. The existing process was manual, fragmented, and didn’t scale.

Rather than opting for a cross-platform framework, we built fully native applications for both iOS and Android. This gave us direct, low-level access to the Insta360 camera SDK — critical for reliable Bluetooth/Wi-Fi camera pairing, real-time preview, and high-resolution file transfer.

The iOS app was built with SwiftUI, and the Android app with Jetpack Compose and Material 3. Both follow modern architectural patterns: the Android app uses Voyager for navigation and Koin for dependency injection, while the iOS app relies on SwiftUI's native state management.

The core capture workflow guides field workers through a structured process: select a site, start a capture session, take 360° and standard photos, tag each image to a specific room, and work through a site checklist. The apps track every capture locally, so nothing is lost if the network drops mid-session.
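The capture workflow above maps naturally onto a small local data model. The sketch below is written in Python for readability rather than the apps' Swift or Kotlin, and every type and field name in it (`CaptureSession`, `Capture`, `PhotoKind`) is hypothetical. It only illustrates the idea: each capture is recorded on the device first, tagged to a room, and tracked against the site's checklist.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from uuid import uuid4


class PhotoKind(Enum):
    PANORAMA_360 = "360"
    STANDARD = "standard"


@dataclass
class Capture:
    room: str
    kind: PhotoKind
    local_path: str  # the file stays on the device until its upload succeeds
    id: str = field(default_factory=lambda: str(uuid4()))
    taken_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    uploaded: bool = False


@dataclass
class CaptureSession:
    site_id: str
    checklist: list[str]  # checklist item labels for this site (hypothetical)
    captures: list[Capture] = field(default_factory=list)
    completed: set[str] = field(default_factory=set)

    def add_capture(self, room, kind, local_path):
        # Record the capture locally first, so a dropped network never loses it.
        capture = Capture(room=room, kind=kind, local_path=local_path)
        self.captures.append(capture)
        return capture

    def complete(self, item):
        if item not in self.checklist:
            raise ValueError(f"{item!r} is not on this site's checklist")
        self.completed.add(item)

    def outstanding(self):
        # Checklist items still to do, in their original order.
        return [item for item in self.checklist if item not in self.completed]

    def by_room(self):
        # Group captures by the room they were tagged to.
        rooms = {}
        for capture in self.captures:
            rooms.setdefault(capture.room, []).append(capture)
        return rooms
```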

Connectivity on construction sites is unpredictable, so we designed the entire mobile experience around offline-first principles.

On Android, a Room database serves as the local cache, with the Paging library's RemoteMediator handling pagination and sync. On iOS, Core Data provides the persistence layer. Both platforms queue uploads in the background and resume them automatically when connectivity returns: the Android app uses WorkManager for this, while the iOS app uses background URLSession transfers.
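A minimal sketch of the queue semantics described here, assuming a single local table of pending uploads. It uses Python's built-in `sqlite3` as a stand-in for Room or Core Data, and a plain `drain()` call in place of WorkManager or a background `URLSession`; the table, columns and method names are illustrative, not the apps' actual schema.

```python
import sqlite3


class UploadQueue:
    """Hypothetical persisted upload queue (stand-in for Room / Core Data)."""

    def __init__(self, path=":memory:"):
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS pending_upload ("
            " capture_id TEXT PRIMARY KEY,"
            " local_path TEXT NOT NULL,"
            " attempts INTEGER NOT NULL DEFAULT 0)"
        )

    def enqueue(self, capture_id, local_path):
        # INSERT OR IGNORE makes queueing the same capture twice harmless.
        with self.db:
            self.db.execute(
                "INSERT OR IGNORE INTO pending_upload (capture_id, local_path) VALUES (?, ?)",
                (capture_id, local_path),
            )

    def pending(self):
        return self.db.execute(
            "SELECT capture_id, local_path FROM pending_upload ORDER BY rowid"
        ).fetchall()

    def drain(self, is_online, upload):
        """Try every pending upload; failures stay queued for the next attempt."""
        if not is_online():
            return 0
        done = 0
        for capture_id, local_path in self.pending():
            try:
                upload(capture_id, local_path)
            except OSError:
                # Network dropped mid-upload: count the attempt, keep the row.
                with self.db:
                    self.db.execute(
                        "UPDATE pending_upload SET attempts = attempts + 1 WHERE capture_id = ?",
                        (capture_id,),
                    )
                continue
            # Remove the row only once the upload has actually succeeded.
            with self.db:
                self.db.execute("DELETE FROM pending_upload WHERE capture_id = ?", (capture_id,))
            done += 1
        return done
```

Because failed uploads simply stay in the table, whatever triggers the next `drain()` (a connectivity change, an app launch) picks them up again without any extra bookkeeping.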

Uploads use pre-signed S3 URLs with configurable time-to-live, meaning large 360° image files go directly to cloud storage without routing through the backend. If a URL expires before the upload completes, the app refreshes it and picks up where it left off.
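The source doesn't say how resuming works. One plausible approach, assumed here, is to send the file in chunks, in the spirit of an S3 multipart upload, and advance the offset only once a chunk is confirmed, so a URL refresh never repeats work that already landed. `request_url` and `send_chunk` are hypothetical callables standing in for the backend endpoint and the HTTP transport, and `max_refreshes` is an illustrative safety cap.

```python
import time
from dataclasses import dataclass


class UrlExpired(Exception):
    """Raised by the (hypothetical) transport when storage rejects an expired URL."""


@dataclass
class PresignedUrl:
    url: str
    expires_at: float  # Unix timestamp

    def expired(self, now):
        return now >= self.expires_at


def upload_with_refresh(data, chunk_size, request_url, send_chunk,
                        clock=time.time, max_refreshes=5):
    """Upload `data` chunk by chunk, swapping in a fresh URL whenever the current one expires.

    Returns the number of times a new URL had to be requested.
    """
    url = request_url()
    offset = 0
    refreshes = 0

    def refresh():
        nonlocal url, refreshes
        refreshes += 1
        if refreshes > max_refreshes:
            raise RuntimeError("could not obtain a usable upload URL")
        url = request_url()

    while offset < len(data):
        now = clock()
        while url.expired(now):
            refresh()  # renew proactively before sending the next chunk
        chunk = data[offset:offset + chunk_size]
        try:
            send_chunk(url, offset, chunk)
        except UrlExpired:
            refresh()  # expired in flight: renew and resend the same chunk
            continue
        offset += len(chunk)  # advance only after the chunk is confirmed
    return refreshes
```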

The backend is a Laravel 12 application that orchestrates the entire processing workflow. When raw 360° footage lands in S3, the pipeline kicks off a multi-stage process:

Each processing job is tracked as an immutable record with one or more pipeline runs. The system uses an event-driven architecture — Python workers send structured callbacks to the Laravel backend as they progress through each stage, and every event is stored with a fingerprint hash for idempotent deduplication.
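The deduplication step can be sketched as follows. The source doesn't say which fields go into the fingerprint or which hash is used; this sketch assumes SHA-256 over a canonical JSON encoding of the whole callback payload, and the event fields (`run_id`, `stage`, `status`) and stage names are hypothetical.

```python
import hashlib
import json


def fingerprint(event):
    """SHA-256 over canonical JSON, so key order and whitespace don't change the hash."""
    canonical = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class EventStore:
    def __init__(self):
        self._events = {}  # fingerprint -> event, kept in arrival order

    def record(self, event):
        """Store the event once; return False if an identical callback was already seen."""
        fp = fingerprint(event)
        if fp in self._events:
            return False
        self._events[fp] = event
        return True

    def timeline(self, run_id):
        # Distinct events for one pipeline run, in the order they first arrived.
        return [e for e in self._events.values() if e["run_id"] == run_id]
```

In a database-backed version, a unique index on the fingerprint column would give the same guarantee atomically. If callbacks carry a per-delivery field such as a send timestamp, it would have to be left out of the hash, or a genuine retry would look like a new event.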

The pipeline includes intelligent failure handling. It distinguishes between retryable failures (such as cloud capacity exhaustion, where it waits and retries after 15 minutes) and non-retryable failures (such as processing errors in the source imagery).
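A minimal sketch of the retry decision, assuming the worker reports a failure category the backend can recognise. The two categories mirror the examples above and the 15-minute delay comes from the text; the enum names and the `max_attempts` cap are assumptions added for illustration. On a Horizon-managed queue, a positive decision would translate into re-dispatching the run with a delay.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class FailureKind(Enum):
    CAPACITY_EXHAUSTED = "capacity_exhausted"      # cloud had no free compute: worth retrying
    SOURCE_IMAGERY_ERROR = "source_imagery_error"  # the input itself is bad: retrying won't help


RETRYABLE = {FailureKind.CAPACITY_EXHAUSTED}
CAPACITY_RETRY_DELAY = timedelta(minutes=15)


@dataclass(frozen=True)
class RetryDecision:
    retry: bool
    not_before: Optional[datetime] = None  # earliest time to re-dispatch the run


def decide(kind, failed_at, attempts, max_attempts=5):
    """Retry only failures that might succeed later, and never more than max_attempts times."""
    if kind in RETRYABLE and attempts < max_attempts:
        return RetryDecision(retry=True, not_before=failed_at + CAPACITY_RETRY_DELAY)
    # Non-retryable or out of attempts: leave it failed for someone to inspect.
    return RetryDecision(retry=False)
```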

A Vue 3 dashboard built with Inertia.js gives the team real-time visibility into every job — with status tracking, event timelines grouped by pipeline stage, downloadable output artifacts via signed URLs, and the ability to manually retry failed runs.
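One way to reconcile an immutable job record with manual retries is to treat every retry as a new run appended to the job, leaving the failed run untouched so its events still appear in the timeline. This is a sketch under that assumption; `Job`, `Run`, `RunStatus` and `retry` are hypothetical names, not the Laravel backend's actual models.

```python
from dataclasses import dataclass
from enum import Enum


class RunStatus(Enum):
    QUEUED = "queued"
    RUNNING = "running"
    SUCCEEDED = "succeeded"
    FAILED = "failed"


@dataclass(frozen=True)
class Job:
    job_id: str
    source_key: str  # S3 key of the raw 360° upload; fixed for the job's lifetime


@dataclass
class Run:
    job: Job
    number: int
    status: RunStatus = RunStatus.QUEUED


def retry(runs):
    """Manual retry from the dashboard: append a new run instead of rewriting the failed one."""
    latest = runs[-1]
    if latest.status is not RunStatus.FAILED:
        raise ValueError("only a failed run can be retried")
    new_run = Run(job=latest.job, number=latest.number + 1)
    runs.append(new_run)
    return new_run
```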

The cloud infrastructure runs on AWS, with S3 for storage, STS for dynamic credential generation, and Redis-backed queues managed through Laravel Horizon. Pre-signed URLs ensure that mobile devices never need long-lived AWS credentials — each upload gets a scoped, time-limited token.
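The idea behind a scoped, time-limited upload URL can be shown with a generic HMAC signature. This is a simplified illustration, not AWS Signature Version 4: real S3 pre-signed URLs are generated by the AWS SDK and sign more (credential scope, region, headers). `SECRET`, `sign_upload`, `verify` and the `uploads.example.com` host are all hypothetical. The point is that the device only ever holds a URL bound to one method, one object key and one expiry time, while the signing secret stays on the server.

```python
import hashlib
import hmac
import time
from urllib.parse import parse_qs, urlencode, urlparse

SECRET = b"server-side-secret"  # hypothetical; never shipped to devices


def _signature(method, key, expires):
    message = f"{method}\n{key}\n{expires}".encode()
    return hmac.new(SECRET, message, hashlib.sha256).hexdigest()


def sign_upload(key, ttl_seconds, now=None):
    """Issue a PUT URL for exactly one object key, valid for ttl_seconds."""
    now = time.time() if now is None else now
    expires = int(now + ttl_seconds)
    sig = _signature("PUT", key, expires)
    return f"https://uploads.example.com/{key}?" + urlencode({"expires": expires, "sig": sig})


def verify(method, url, now=None):
    """Storage-side check: right method, untampered key, not yet expired."""
    now = time.time() if now is None else now
    parsed = urlparse(url)
    key = parsed.path.lstrip("/")
    params = parse_qs(parsed.query)
    expires = int(params["expires"][0])
    if now >= expires:
        return False
    # compare_digest avoids leaking how many characters matched.
    return hmac.compare_digest(params["sig"][0], _signature(method, key, expires))
```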

The processing pipeline runs in containerised workers, with CloudWatch providing log aggregation and Sentry handling error tracking across all three codebases.

Large 360° files upload directly to S3 via pre-signed URLs, with automatic retry and resume on both platforms. Uploads continue even when the app is backgrounded.

The cloud pipeline categorises failures and only retries when there's a reasonable chance of success, avoiding unnecessary compute costs.

Pipeline callbacks use fingerprint-based deduplication, so the system handles network instability and message retries gracefully.

The Android app implements Haversine formula calculations directly in SQLite queries, sorting sites by proximity to the user’s current location.
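A sketch of distance-sorted site lookup. The source doesn't show the app's query, and SQLite's built-in trigonometric functions are an optional compile-time feature (added in SQLite 3.35), so how the Android app makes them available isn't known. Here Python's `sqlite3.Connection.create_function` registers a haversine function so it can be used directly in `ORDER BY`; the `site` table, its columns and the sample coordinates are hypothetical.

```python
import math
import sqlite3

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    # Clamp guards against rounding pushing the value just above 1 for near-antipodal points.
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))


def open_sites_db():
    db = sqlite3.connect(":memory:")
    # Expose the Python function to SQL so queries can call haversine_km(...).
    db.create_function("haversine_km", 4, haversine_km)
    db.execute("CREATE TABLE site (id INTEGER PRIMARY KEY, name TEXT, lat REAL, lng REAL)")
    db.executemany(
        "INSERT INTO site (name, lat, lng) VALUES (?, ?, ?)",
        [("Site C", 51.5074, -0.1278), ("Site A", 53.8008, -1.5491), ("Site B", 53.4808, -2.2426)],
    )
    return db


def sites_near(db, lat, lng):
    """All sites, nearest first, with their distance from the user's position."""
    return db.execute(
        "SELECT name, haversine_km(?, ?, lat, lng) AS distance_km FROM site ORDER BY distance_km",
        (lat, lng),
    ).fetchall()
```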

The Android app uses Gradle product flavours to manage environments (live/dev) and feature toggles (360° photos enabled/disabled), making it straightforward to test and deploy different configurations.

Appoly delivered a complete, production-ready platform comprising two native mobile applications, a cloud processing pipeline, and a monitoring dashboard. The system enables AIM Housing to:

The platform is built to grow with AIM Housing’s portfolio, with the queue-based processing architecture and background sync patterns designed to handle increasing volumes of sites, captures, and processing jobs.